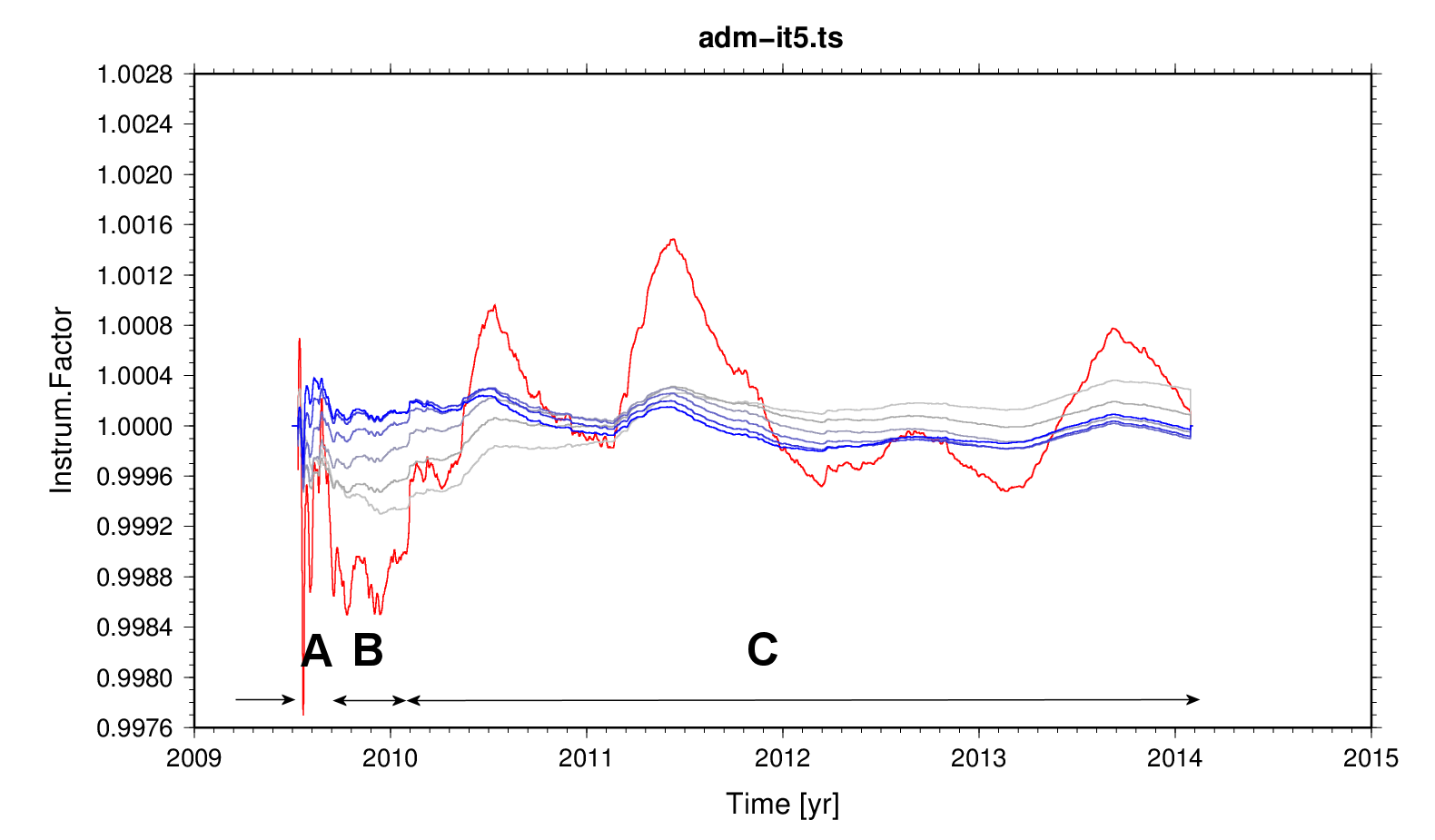

Figure 1 - Sliding-window admittance of average predicted tides w.r.t. observed tides.

Grey to blue: relative change per iteration. Red: final product. Three periods for the instrument factor can be distinguished: A - normal, B - low, C - normal again.

The behaviour of the instrument sensitivity is not well understood, apart from the annual periodicity, which might have a simple explanation.
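As an illustration of what a sliding-window admittance can look like in practice, the following is a minimal sketch, not the processing used for Figure 1. It assumes that the admittance in each window is the least-squares factor `a` in `observed ≈ a * predicted` after removing the window mean. The function name `sliding_admittance` and the parameters `window` and `step` (both in samples) are hypothetical.

```python
import numpy as np


def sliding_admittance(predicted, observed, window, step):
    """Least-squares factor a in observed ~ a * predicted, per window.

    Assumption: both series are equally sampled and gap-free.
    Returns the window-centre sample indices and the fitted factors.
    """
    predicted = np.asarray(predicted, dtype=float)
    observed = np.asarray(observed, dtype=float)
    centres, factors = [], []
    for start in range(0, len(predicted) - window + 1, step):
        p = predicted[start:start + window]
        o = observed[start:start + window]
        # Remove the window mean so a constant offset does not bias the fit.
        p = p - p.mean()
        o = o - o.mean()
        denom = np.dot(p, p)
        if denom == 0.0:
            continue  # flat predictor, factor undefined in this window
        centres.append(start + window // 2)
        factors.append(np.dot(p, o) / denom)
    return np.array(centres), np.array(factors)


# Synthetic check: a gain that drops from 1.0 to 0.8 halfway through.
t = np.arange(2000.0)                      # hours (assumed sampling)
pred = np.sin(2.0 * np.pi * t / 12.42)     # M2-like period in hours
gain = np.where(t < 1000, 1.0, 0.8)
obs = gain * pred + 3.0                    # constant offset added
centres, factors = sliding_admittance(pred, obs, window=200, step=100)
```

On the synthetic series, windows lying entirely in the first half recover a factor of 1.0 and those in the second half 0.8, analogous to the normal and low periods distinguished in Figure 1. A real analysis would additionally have to handle drift, gaps, and the choice of window length relative to the annual signal.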

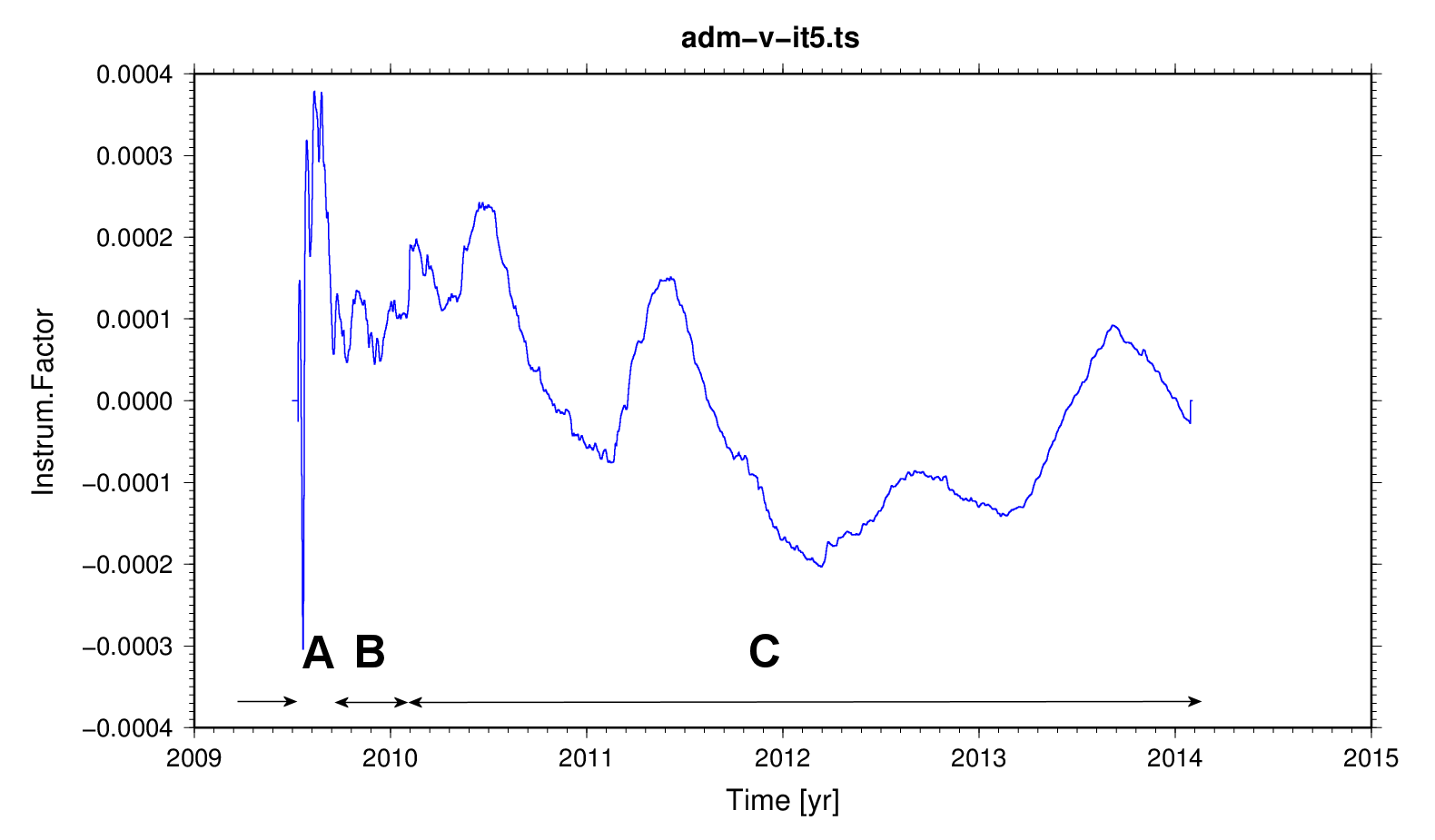

Figure 2 - Iteration 5 with the time-derivative of the theoretical tide. The annual periodicity might be attributed to wave-group design and high-order tides. Period A appears anomalous; however, consider that the annual peak in 2009 might already have been passed, and the pressure control circuit (which appears to be the reason for the lower sensitivity and, b.t.w., a profoundly different drift signature) may have started to malfunction at the beginning of period B.
